NVIDIA CEO Jensen Huang's latest bylined article: "The Five-Layer 'Cake' of AI"

AI is one of the most powerful forces shaping our world today. It is not merely a clever application or a single model; it is infrastructure as essential as electricity and the internet.

AI runs on real hardware, real energy, and real economics. It transforms raw materials into intelligence at scale. Every company will apply it. Every country or region will develop it.

To understand why AI is evolving this way, we need to reason from first principles and see what has fundamentally changed in computing.

From Pre-Made Software to Real-Time Intelligence

Throughout the history of computing, software was typically written ahead of time. A human described an algorithm, and the computer carried it out. Data had to be carefully designed, stored in tables, and retrieved through precise queries. SQL became indispensable because that was how the world worked.

AI breaks this pattern.

For the first time, we have computers that can understand unstructured information. They recognize images, read text, hear speech, and grasp meaning. They reason based on context and intent. Most importantly, they generate intelligence in real time.

Every response is created anew. Every answer depends on the context you provide. This is not software retrieving stored instructions; it is software reasoning and generating intelligence on demand.

Because intelligence is generated in real time, the entire computing architecture behind it must be redesigned.

AI as Infrastructure

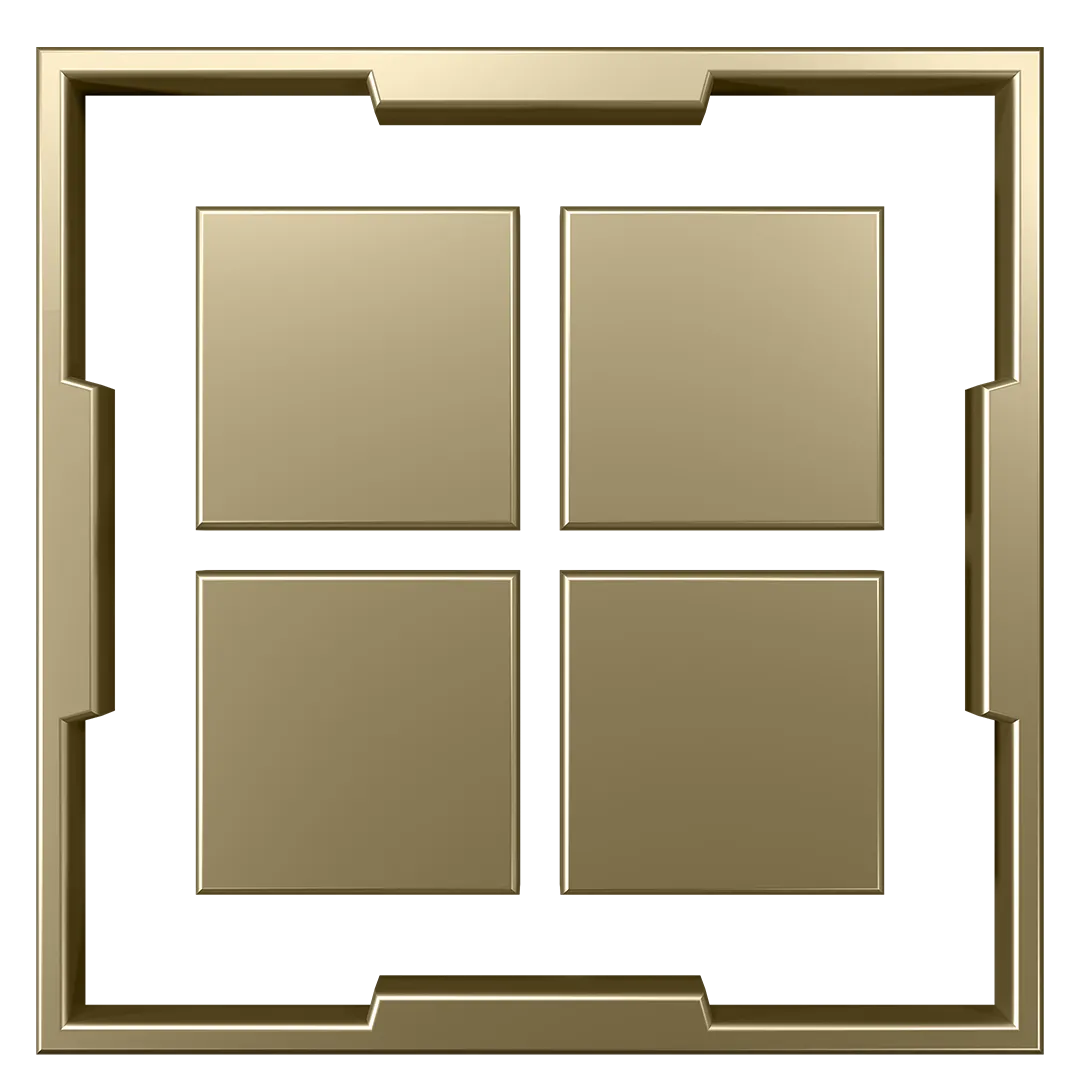

Viewed through an industrial lens, AI breaks down into five layers.

At the very bottom is energy. Intelligence generated in real time requires power generated in real time. Every token is the result of electrons moving, heat being managed, and energy being converted into computation. Below this level, there are no abstractions. Energy is the first principle of AI infrastructure: the fundamental constraint on how much intelligence a system can produce.

Above energy comes the chip layer. These are processors designed to turn energy into computation efficiently, at scale. AI workloads demand massive parallelism, high-bandwidth memory, and fast interconnects. Progress at the chip layer determines how fast AI scales and how affordable intelligence becomes.

Above chips lies the infrastructure layer. This includes land, power delivery, cooling systems, construction engineering, networking, and the systems that orchestrate thousands of processors into a single machine. These systems are AI factories. They are not built to store information; they are built to manufacture intelligence.

Above infrastructure comes the model layer. AI models understand many types of information: language, biology, chemistry, physics, finance, medicine, and the physical world itself. Language models are just one category. Some of the most transformative work is happening in protein AI, chemistry AI, physical simulation, robotics, and autonomous systems.

At the top sits the application layer, where economic value is created: drug discovery platforms, industrial robotics, legal assistants, self-driving cars. An autonomous vehicle is AI applied to a machine. A humanoid robot is AI given a physical body. Same architecture, different outcomes.

That's the five-layer cake:

Energy → Chip → Infrastructure → Model → Application

Every successful application pulls through every layer beneath it, all the way down to the power plant keeping it running.

We're just getting started with this buildout. Hundreds of billions of dollars have already been invested. Trillions of dollars' worth of infrastructure still needs to be built.

Look around the world, and you'll see chip fabs, computer assembly plants, and AI factories being constructed at an unprecedented scale. This is becoming the largest infrastructure buildout in human history.

The human capital required to support this buildout is immense. AI factories need electricians, plumbers, pipefitters, steel workers, network technicians, installers, and operators. These are skilled, well-paying jobs, and they are in short supply today. You don't need a PhD in computer science to be part of this transformation.

At the same time, AI is increasing productivity across the knowledge economy. Take radiology: AI can already help read scans, yet demand for radiologists continues to grow. This isn't a contradiction.

Radiologists care for patients; reading scans is only one part of their job. When AI handles more of the routine work, radiologists can focus on judgment, communication, and care. Hospitals become more productive, serve more patients, and hire more staff.

Productivity creates capacity. Capacity drives growth.

What Changed Over the Past Year

Over the last year, AI crossed an important threshold. Model capability improved significantly, making large-scale deployment possible. Reasoning got better. Hallucinations decreased. Grounding improved dramatically. For the first time, applications built on AI started generating real economic value.

Use cases in drug discovery, logistics, customer service, software development, and manufacturing have shown strong product-market fit. These applications create powerful pull-through demand for every layer beneath them.

Open models play a critical role here. Most of the world's models are freely available. Researchers, startups, enterprises, and countries rely on open models to participate in advanced AI. When open models reach the frontier, they don't just change software; they activate demand across the entire stack.

DeepSeek-R1 is a great example. By making a powerful reasoning model broadly available, it accelerated adoption at the application layer and pulled through increased demand for training, infrastructure, chips, and energy beneath it.

The Bottom Line

When you view AI as essential infrastructure, the implications become clear.

This wave of AI began with the Transformer and large language models, but it never stopped there. This is an industrial transformation that is reshaping how energy is produced and consumed, how factories are built, how work is organized, and how economies grow.

AI factories are being built today because intelligence is now generated in real time. Chips are being redesigned because efficiency determines how fast intelligence scales. Energy is now central because it fundamentally limits how much intelligence can be produced. Applications are accelerating because models have crossed the threshold for deployment at scale.

Every layer reinforces the others.

That's why the buildout is so large. Why it touches so many industries at once. Why it won't be contained to one company, one country, or one sector. Every company will use it. Every country will develop it.

We're still early. Most of the infrastructure isn't built yet. Most of the workforce isn't trained yet. Most of the opportunity hasn't been discovered yet.

But the direction is set.

AI is becoming the infrastructure of the modern world. And the choices we make now—how fast we build, how broadly we participate, and how responsibly we deploy it—will determine where this era leads.